Risk management with AAD and ML: an unreasonably effective combination

Working paper: on SSRN

GitHub: github.com/differential-machine-learning

TensorFlow notebook: on Google Colab

Differential machine learning is an extension of supervised learning in which machine learning (ML) models are trained on examples of not only inputs and labels but also the differentials of labels with respect to inputs. It applies in all situations where high-quality first-order derivatives with respect to the training inputs are available.

In the context of financial Derivatives and risk management, pathwise differentials are efficiently computed with automatic adjoint differentiation (AAD). Differential machine learning gives us unreasonably effective pricing and risk approximations. We can produce fast pricing analytics in models too complex for closed-form solutions, extract the risk factors of complex transactions and trading books, and efficiently compute risk management metrics such as reports across a large number of scenarios, backtesting and simulation of hedge strategies, or regulatory calculations like XVA, CCR, FRTB or SIMM-MVA.
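To make the AAD step concrete, here is a toy reverse-mode tape in Python that turns one Monte Carlo path into one training example: a label (the discounted payoff) plus its pathwise differentials. The setup is an assumption chosen for illustration: a European call under Black-Scholes with hypothetical parameters (strike 100, rate 1%, maturity 1 year), with spot `S0` and volatility `sigma` as the inputs. The names `Node`, `backward` and `pathwise_sample` are hypothetical; this is a sketch of the technique, not the paper's code or any library's API.

```python
import math
import random

# Toy global tape: nodes in creation order. Reversing it gives a valid
# backward order because every node is created after its parents.
TAPE = []


class Node:
    """One recorded operation: its value, its parents with local partials, its adjoint."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # tuple of (parent Node, d self / d parent)
        self.adjoint = 0.0
        TAPE.append(self)


def add(a, b):
    return Node(a.value + b.value, ((a, 1.0), (b, 1.0)))


def mul(a, b):
    return Node(a.value * b.value, ((a, b.value), (b, a.value)))


def add_const(a, c):
    return Node(a.value + c, ((a, 1.0),))


def mul_const(a, c):
    return Node(a.value * c, ((a, c),))


def exp_node(a):
    v = math.exp(a.value)
    return Node(v, ((a, v),))


def call_payoff(s, strike):
    # max(s - K, 0); its pathwise derivative is the indicator 1{s > K}
    return Node(max(s.value - strike, 0.0), ((s, 1.0 if s.value > strike else 0.0),))


def backward(output):
    """Reverse sweep: propagate adjoints from the output back to every input."""
    output.adjoint = 1.0
    for node in reversed(TAPE):
        for parent, partial in node.parents:
            parent.adjoint += node.adjoint * partial


def pathwise_sample(s0_value, sigma_value, z, strike=100.0, r=0.01, T=1.0):
    """Return (discounted payoff, d payoff/d S0, d payoff/d sigma) for one path."""
    TAPE.clear()  # fresh tape for each path
    s0, sigma = Node(s0_value), Node(sigma_value)
    # log-return (r - sigma^2 / 2) T + sigma sqrt(T) z
    drift = add_const(mul_const(mul(sigma, sigma), -0.5 * T), r * T)
    diffusion = mul_const(sigma, math.sqrt(T) * z)
    s_T = mul(s0, exp_node(add(drift, diffusion)))
    payoff = mul_const(call_payoff(s_T, strike), math.exp(-r * T))
    backward(payoff)  # one sweep yields the derivatives to all inputs
    return payoff.value, s0.adjoint, sigma.adjoint


if __name__ == "__main__":
    random.seed(0)
    for _ in range(3):
        label, delta, vega = pathwise_sample(100.0, 0.2, random.gauss(0.0, 1.0))
        print(f"label={label:.4f}  dlabel/dS0={delta:.4f}  dlabel/dsigma={vega:.4f}")
```

A single backward sweep returns the differentials to every input at once, at a cost that is a small constant multiple of the forward evaluation, which is why AAD makes differential training sets cheap to produce. Production AAD tools avoid the per-operation Python objects used here for readability.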

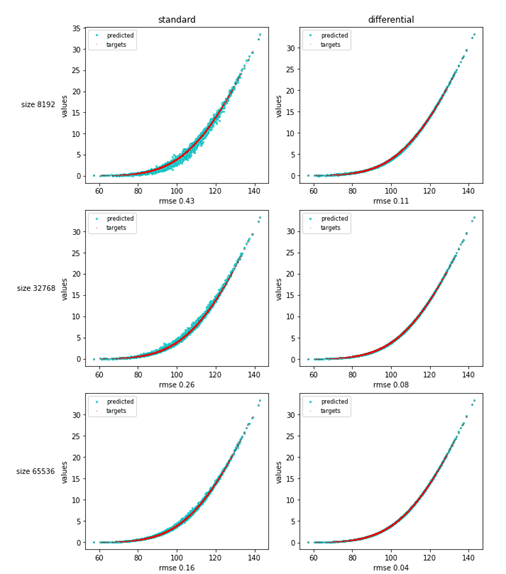

The article focuses on differential deep learning (DL), arguably its strongest application. Standard DL trains neural networks (NN) on pointwise examples, whereas differential DL also teaches them the shape of the target function, which explains the improved performance. We include numerical examples, both idealized and real-world.
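One way to write a differential training objective, stated here as an assumption rather than a quote of the paper: given training examples $(x_i, y_i, \bar x_i)$ for $i = 1, \dots, m$, where $\bar x_i \in \mathbb{R}^n$ holds the pathwise differentials of the label $y_i$ with respect to the input $x_i$, and a network $\hat f(\cdot;\theta)$, penalize errors on both values and input gradients, with hypothetical weights $\lambda_j \ge 0$:

$$
C(\theta) = \frac{1}{m}\sum_{i=1}^{m}\left(\hat f(x_i;\theta) - y_i\right)^2 + \sum_{j=1}^{n}\lambda_j\,\frac{1}{m}\sum_{i=1}^{m}\left(\frac{\partial \hat f}{\partial x_j}(x_i;\theta) - \bar x_{i,j}\right)^2
$$

The predicted differentials $\partial \hat f / \partial x_j$ come from backpropagating through the network with respect to its inputs, so minimizing $C$ fits the slopes of the target function as well as its values. Setting every $\lambda_j = 0$ recovers standard training on values alone.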

In the online appendices, we apply differential learning to other ML models, such as classic regression and principal component analysis (PCA), with equally remarkable results.
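For the classic regression case, a minimal sketch (one natural formulation we assume here, not necessarily the weighting used in the appendices) is least squares on a polynomial basis with an extra penalty on the mismatch between the model's derivative and the differential labels. With a single weight `lam`, the normal equations become $(\Phi^\top\Phi + \lambda\,\Phi'^\top\Phi')\beta = \Phi^\top y + \lambda\,\Phi'^\top z$, where $\Phi'$ holds the basis derivatives and $z$ the differentials. The helpers `basis`, `basis_derivative`, `solve` and `fit_differential_regression` are hypothetical names, written in plain Python to stay self-contained.

```python
def basis(x, degree):
    """Monomials 1, x, ..., x^degree."""
    return [x ** j for j in range(degree + 1)]


def basis_derivative(x, degree):
    """Derivatives of the monomials with respect to x."""
    return [j * x ** (j - 1) if j > 0 else 0.0 for j in range(degree + 1)]


def solve(A, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    beta = [0.0] * n
    for r in range(n - 1, -1, -1):
        beta[r] = (M[r][n] - sum(M[r][c] * beta[c] for c in range(r + 1, n))) / M[r][r]
    return beta


def fit_differential_regression(xs, ys, zs, degree, lam=1.0):
    """Minimise sum (y - phi.beta)^2 + lam * sum (z - phi'.beta)^2 over beta."""
    n = degree + 1
    A = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    for x, y, z in zip(xs, ys, zs):
        phi, dphi = basis(x, degree), basis_derivative(x, degree)
        for j in range(n):
            b[j] += phi[j] * y + lam * dphi[j] * z
            for k in range(n):
                A[j][k] += phi[j] * phi[k] + lam * dphi[j] * dphi[k]
    return solve(A, b)


if __name__ == "__main__":
    def cubic(x):
        return 1.0 + 2.0 * x - 0.5 * x ** 2 + 0.3 * x ** 3

    def cubic_derivative(x):
        return 2.0 - x + 0.9 * x ** 2

    xs = [0.0, 1.0]
    ys = [cubic(x) for x in xs]
    zs = [cubic_derivative(x) for x in xs]
    print(fit_differential_regression(xs, ys, zs, degree=3))
```

In the usage example, two samples of a cubic, each with its value and derivative, are enough to recover all four coefficients, whereas value-only least squares on the same two points would be underdetermined. This is a small instance of the differentials carrying information about the shape of the target function.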

We posted a TensorFlow implementation on Google Colab, including examples from the paper and a discussion of practical implementation.